A Structured Framework for Responsible Information Evaluation and Sharing

Created by Jifry Issadeen

1. Abstract

The rapid expansion of digital communication and mass information exchange has transformed how individuals receive, interpret, and share information. While this has improved access to knowledge, it has also enabled the widespread circulation of misinformation, disinformation, propaganda, and emotionally manipulative content. These phenomena increasingly contribute to social polarization, conflict between communities, and erosion of trust.

The ENSURE Model is proposed as a structured, ethical, and human-centered framework that equips individuals with the skills to critically evaluate information before accepting or sharing it. Applicable to all forms of communication – verbal, written, digital, or broadcast – the model emphasizes intent analysis, content awareness, logical reasoning, emotional intelligence, source verification, and personal responsibility. ENSURE aims to reduce harm, encourage critical thinking, and promote constructive participation in society.

2. Introduction

Information today flows continuously through interpersonal communication, digital environments, broadcast systems, and written media. This constant exposure has reduced the time individuals spend reflecting on the accuracy, intent, and consequences of the information they encounter.

In many cases, misleading information is not shared due to ignorance alone. It is often intentionally designed to attract attention, generate financial gain, influence political or ideological outcomes, provoke emotional reactions, or create divisions among groups. Content that triggers fear, anger, pride, or hostility tends to spread more rapidly than balanced and factual information.

As a result, individuals are no longer passive recipients of information. Each act of sharing contributes to shaping public perception and social behavior. The absence of structured personal verification practices allows misinformation to propagate at scale.

The ENSURE Model addresses this gap by offering a systematic approach that individuals can apply before accepting or sharing any information, regardless of its source or format.

3. Purpose and Scope of the ENSURE Model

The ENSURE Model is designed to:

- Apply universally to all information sources and formats

- Encourage deliberate pause and reflection

- Shift responsibility from systems alone to individual decision-making

- Reduce social harm caused by misinformation and manipulation

- Promote ethical information sharing as a civic responsibility

The model does not aim to suppress opinions or debate. Instead, it distinguishes between free expression and the propagation of unverified or harmful content, fostering neutral evaluation of information rather than judgment of beliefs.

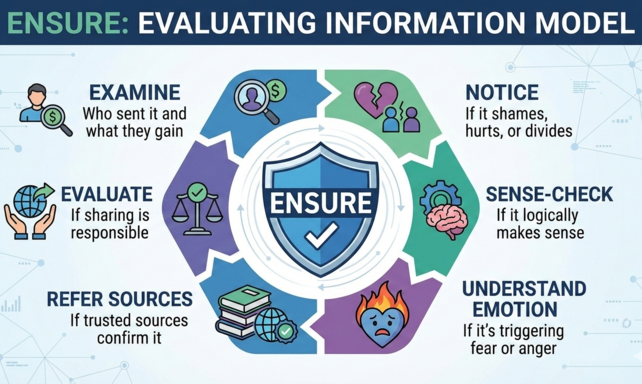

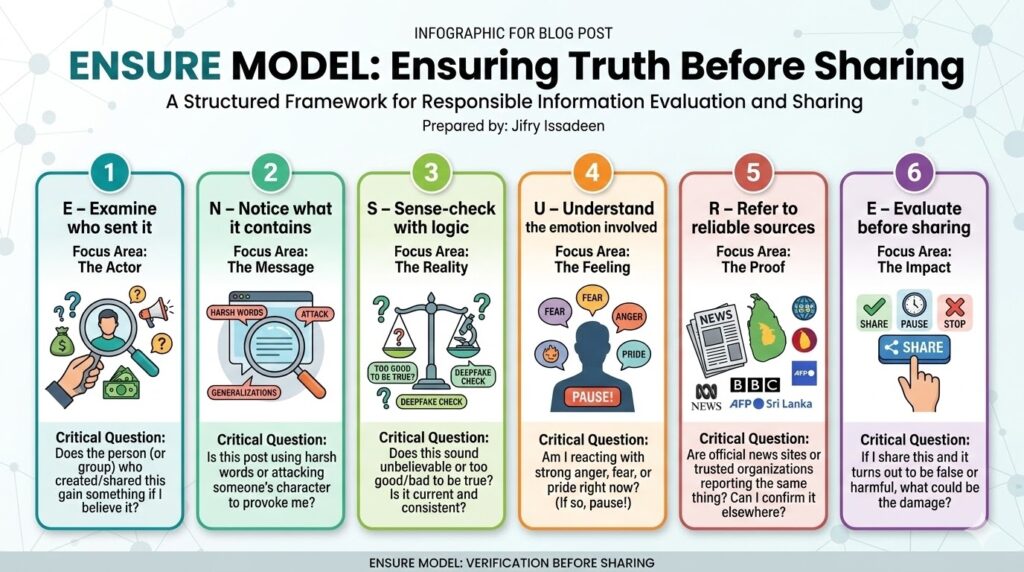

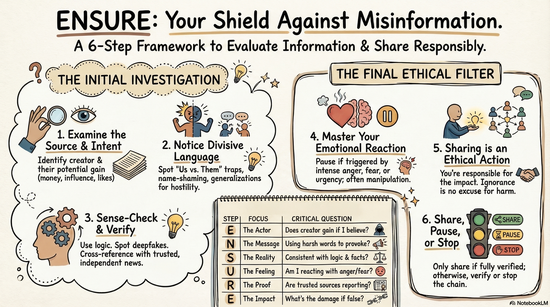

4. Overview of the ENSURE Framework

- E – Examine who sent it

- N – Notice what it contains

- S – Sense-check with logic

- U – Understand the emotion involved

- R – Refer to reliable sources

- E – Evaluate before sharing

Here’s a quick look at the ENSURE steps, designed to be clear and distinct:

| Step | Focus Area | Critical Question to Ask |
| --- | --- | --- |
| E – Examine who sent it | The Actor | Does the person (or group) who created/shared this gain something if I believe it? |
| N – Notice what it contains | The Message | Is this post using harsh words or attacking someone’s character to provoke me? |
| S – Sense-check with logic | The Reality | Does this sound unbelievable or too good/bad to be true? Is the information current and consistent? |
| U – Understand the emotion involved | The Feeling | Am I reacting with strong anger, fear, or pride right now? (If so, pause!) |
| R – Refer to reliable sources | The Proof | Are official news sites or trusted organizations reporting the same thing? Can I confirm it elsewhere? |
| E – Evaluate before sharing | The Impact | If I share this and it turns out to be false or harmful, what could be the damage? |

Each step addresses a distinct dimension of information evaluation, ensuring clarity and non-overlap.
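For readers who want to apply the steps programmatically (for example in a classroom exercise or a reminder tool), the checklist can be sketched as an ordered data structure. This is only an illustration: the names `Step`, `ENSURE_STEPS`, and `flagged_steps` are hypothetical, the questions are shortened paraphrases of the table above, and the yes/no answers are assumed to come from the reader's own judgment.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class Step:
    letter: str    # letter of the acronym
    name: str      # step name as given in the framework
    focus: str     # focus area from the overview table
    question: str  # shortened paraphrase of the critical question


# The six steps, in the order the framework lists them.
ENSURE_STEPS = (
    Step("E", "Examine who sent it", "The Actor",
         "Does the sender or creator gain something if I believe it?"),
    Step("N", "Notice what it contains", "The Message",
         "Does it use harsh words or character attacks to provoke me?"),
    Step("S", "Sense-check with logic", "The Reality",
         "Does it sound unbelievable, outdated, or inconsistent?"),
    Step("U", "Understand the emotion involved", "The Feeling",
         "Am I reacting with strong anger, fear, or pride?"),
    Step("R", "Refer to reliable sources", "The Proof",
         "Am I unable to confirm it with trusted sources?"),
    Step("E", "Evaluate before sharing", "The Impact",
         "Could sharing it cause damage if it is false or harmful?"),
)


def flagged_steps(concerns: Sequence[bool]) -> List[Step]:
    """Return the steps whose question raised a concern, in ENSURE order.

    `concerns` holds one boolean per step (True means "yes, this worries me").
    """
    if len(concerns) != len(ENSURE_STEPS):
        raise ValueError("expected one answer per ENSURE step")
    return [step for step, concern in zip(ENSURE_STEPS, concerns) if concern]
```

Calling `flagged_steps([False, True, False, True, False, False])` would return the "Notice" and "Understand" steps, which tells the reader where to look more closely before going further. Answers are positional rather than keyed by letter because the acronym contains two steps that start with E.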

E – Examine Who Sent It

Definition

Examination focuses on the origin, intent, and incentive behind the information. It asks not only who shared the message, but why it was shared and who truly created it.

Key considerations

When examining the sender, individuals should consider:

- The identity and accountability of the source (is it a real person/organization, or an anonymous account?).

- The “Source of the Source”: If someone you know shared it, where did they get it from? (e.g., a friend, a group chat, a public page).

- Possible motivations, including:

  - Attention or visibility (likes, shares, followers)

  - Financial or material gain (monetization)

  - Political or ideological influence (propaganda, election interference)

  - Social validation or status

  - Propaganda or narrative control

  - Character attacks or reputation damage

  - Deliberate creation of conflict or division

- Patterns of repeated behavior or agenda-driven sharing

Not all sharing is malicious. Responsible examination nonetheless requires awareness that the benefit to the sender often explains why the content is being shared.

Practical action

- Ask what the sender (or the original creator) gains if the message spreads.

- Identify whether the sharing appears purely informational or has a strategic agenda.

- Treat credibility as low when incentives for manipulation are high.

- Question “trusted” forwarders: Even if a friend shares it, always consider the original source.

Early examination can eliminate unreliable content before further engagement.

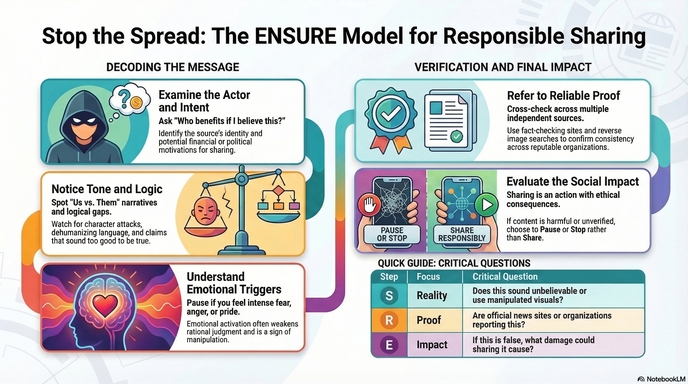

N – Notice What It Contains

Definition

Noticing focuses on the content itself, independent of who shared it. This step evaluates what the message is doing, not just what it claims.

Key areas to notice

- Whether the content is:

  - Informative or opinion-based (clearly labelled as such)

  - Balanced or one-sided (presenting only one perspective)

  - Constructive or destructive

- Presence of:

  - Name-shaming or character attacks

  - Generalizations about groups (e.g., “all people from X are…”)

  - Dehumanizing or stereotyping language

  - Moral humiliation or ridicule

- The “Us versus Them” trap: Does the message try to divide people into opposing groups (e.g., “our community vs. their community,” “good vs. evil”)?

- Whether the message frames issues as absolute good versus absolute evil.

This step separates content harm from source intent.

Practical action

- Identify if the message attacks the dignity, fairness, or social harmony of individuals or groups.

- Recognize framing techniques that encourage hostility or division.

Avoid engaging with content designed to provoke rather than inform.

S – Sense-Check with Logic

Definition

Sense-checking evaluates whether the information is logically coherent, factually sound, and realistically possible.

Key considerations

- Does the claim align with basic logic, science, or established knowledge?

- Are facts exaggerated, oversimplified, or taken out of context?

- Are extraordinary claims supported by proportionate evidence?

- Is old or unrelated information presented as current?

- “Visual Logic”: If it’s a photo or video, does it look real? Are there strange elements, unnatural movements, or inconsistencies (e.g., strange hands, uneven lighting, odd audio sync) that might suggest it’s AI-generated (a “deepfake”) or digitally altered?

- Can you identify any logical fallacies (e.g., “everyone believes this,” “this caused that just because it happened after it”)?

Logical inconsistency is one of the strongest indicators of misinformation.

Practical action

- Test the claim against common sense and known facts.

- Question improbabilities and absolute statements.

- Reject claims that rely on shock or emotional activation rather than clear explanation.

Examine images/videos closely for signs of manipulation or AI generation.

U – Understand the Emotion Involved

Definition

Understanding emotion focuses on recognizing psychological influence embedded in the message and how it affects your own reactions.

Key emotional triggers

- Fear and insecurity (e.g., “Your culture is under attack!”)

- Anger and resentment (e.g., “They are taking advantage of you!”)

- Pride or superiority (e.g., “Our group is the best, everyone else is wrong!”)

- Victimhood narratives (e.g., “We are being persecuted!”)

- Urgency: Does the message demand immediate sharing or action, leaving no time for thought?

Emotionally charged content weakens rational judgment and accelerates impulsive sharing.

Practical action

- Identify your own emotional reactions before responding. Take a deep breath.

- Pause when emotions are intense. Do not click, comment, or share while feeling strong anger, fear, or excitement.

- Remember that emotional activation often serves manipulation, not truth.

Ask yourself: “Is this post making me feel too strongly to think clearly?”

R – Refer to Reliable Sources

Definition

This step verifies information through independent confirmation from credible, trustworthy sources.

Key principles

- No claim should rely on a single source.

- Reliable verification includes:

  - Cross-checking with multiple, diverse, and credible news organizations (local and international).

  - Consulting official or primary documentation (government reports, scientific studies, organizational statements).

  - Checking for consistency across unrelated, reputable sources.

  - Fact-checking websites: Utilize recognized fact-checking organizations in Sri Lanka (e.g., FactCheck.lk, AFP Sri Lanka) or international ones.

  - Reverse Image Search: Use tools like Google Lens or TinEye to see where an image originally came from and if it has been used out of context.

- Repetition alone does not equal truth; popular doesn’t mean proven.

Practical action

- Cross-check key claims through credible, independent references.

- Reject information that cannot be independently confirmed by reliable sources.

- Avoid treating popularity (lots of likes/shares) as proof of truth.

- Learn how to use digital tools like reverse image search to verify visuals.
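The difference between repetition and independent confirmation can be made concrete with a small sketch. It assumes the reader has noted, for each report of a claim, which outlet published it, where that outlet got the claim, and whether the reader considers the outlet credible. The `Report` type, its fields, and the threshold of two independent origins are illustrative assumptions, not part of the ENSURE Model itself.

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Report:
    outlet: str     # who published or forwarded the claim
    origin: str     # where that outlet got the claim (hypothetical field)
    credible: bool  # the reader's own judgment of the outlet


def independent_confirmations(reports: Iterable[Report]) -> int:
    """Count distinct origins among credible reports.

    Many reposts that trace back to one origin count once, so popularity
    alone does not increase the result.
    """
    return len({r.origin for r in reports if r.credible})


def is_corroborated(reports: Iterable[Report], minimum: int = 2) -> bool:
    """True when at least `minimum` independent, credible origins agree.

    The default of 2 is an assumption reflecting "no single source".
    """
    return independent_confirmations(reports) >= minimum
```

Under these assumptions, fifty forwards of the same viral post still count as one origin, while two credible outlets reporting from separate primary documents count as two.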

E – Evaluate Before Sharing

Definition

Evaluation emphasizes your ethical responsibility in the act of sharing. Sharing is not a neutral act.

Key responsibility principle

Sharing is an action with consequences. If information is false, misleading, or harmful, the individual who shares it becomes partially responsible for its impact, regardless of their intent. Ignorance is not an excuse for causing harm.

Practical action

- Assess potential harm before sharing. Ask: “Could this post cause division, anger, fear, or damage someone’s reputation if it’s false?”

- Choose consciously from three actions:

  - Share: only if the information is fully verified, constructive, and harmless.

  - Pause: if uncertain, take time to verify further, or simply ignore it.

  - Stop: if the information is harmful, misleading, or clearly false, do NOT share it, and consider reporting it.

- Restraint is a form of social responsibility. Your choice to not share unverified content protects society.

Remember: “Verifying before sharing protects society. Sharing without verification risks harming it.”
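The three-way choice above can be written as a short decision rule. The sketch below is one possible reading, assuming the reader has already answered four yes/no questions; the `Action` enum, the `decide` function, and its parameter names are hypothetical labels chosen for illustration.

```python
from enum import Enum


class Action(Enum):
    SHARE = "share"
    PAUSE = "pause"
    STOP = "stop"


def decide(verified: bool, constructive: bool,
           harmful: bool, misleading_or_false: bool) -> Action:
    """Map the reader's answers to Share, Pause, or Stop.

    Stop is checked first, so harmful or false content is never shared
    even if other answers look favourable.
    """
    if harmful or misleading_or_false:
        return Action.STOP
    if verified and constructive:
        return Action.SHARE
    # Anything not yet verified, or not clearly constructive, waits.
    return Action.PAUSE
```

For example, `decide(verified=False, constructive=True, harmful=False, misleading_or_false=False)` returns `Action.PAUSE`: the content may be worth sharing, but not before it has been verified. Treating Pause as the default reflects the principle that restraint is the safe choice under uncertainty.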

5. Conclusion

The ENSURE Model provides a structured and practical response to the challenges of misinformation, manipulation, and social division in the digital age. In an environment where attention and outrage can be monetized, ethical information behavior becomes a civic duty.

By applying ENSURE, individuals move from passive consumption to responsible participation, choosing verification over impulse and construction over destruction. This fosters critical thinking and builds a more resilient, informed society.

“Verifying before sharing protects society.

Sharing without verification risks harming it.”

ENSURE – Ensuring Truth Before Sharing

Prepared by: Jifry Issadeen